Project Personnel: Justin Rufa

For unmanned aircraft systems (UAS) to conduct missions effectively in urban environments, they need a multi-sensor navigation scheme that can operate in the Global Positioning System (GPS) dead zones common in cities filled with large concrete, steel, glass, and brick structures. Such solutions can also transfer to areas where GPS is denied. One part of a potential solution is used by 82 percent of American adults every day. The Long Term Evolution (LTE)-capable smartphones they carry, along with the continually growing and improving cellular networks in major cities, may provide a UAS with sufficient accuracy for approximate positioning in environments where GPS is either unavailable or generally inaccurate.

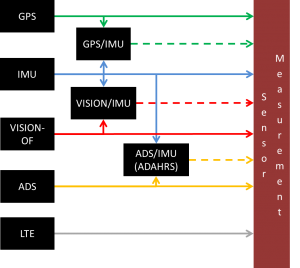

This thesis develops a framework consisting of a user-specific UAS guidance, navigation, and control model and an urban environment model. The framework is used to test combinations of navigation sensors, including an LTE transceiver and a computer vision sensor, that complement the traditional GPS receiver, inertial sensors, and air data system. The availability and accuracy of each sensor signal are drawn from the literature.

Two signal filtering algorithms, the Extended Kalman Filter (EKF) and the Ensemble Kalman Filter (EnKF), are applied to different combinations of signals in the urban environment. In these runs the true propagation model and the filter model match, which isolates how position accuracy is affected by each algorithm, environment, and sensor availability scenario. The EKF is also applied to a sensor suite with mismatched true propagation and filter models to emulate realistic conditions. A location-based logic model is proposed to determine sensor availability and accuracy for a given type of urban environment, based on a map database as well as real-time sensor inputs and filter outputs.
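To make the effect of sensor availability on position accuracy concrete, here is a minimal sketch of a measurement update that fuses GPS and LTE position fixes only when each is available. It is not the thesis code: it is written in Python rather than MATLAB, it uses a scalar linear Kalman update (what an EKF reduces to when the measurement is a direct observation of position), and the sensor accuracies and the `Estimate` and `measurement_update` names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Estimate:
    mean: float  # estimated position along one axis [m]
    var: float   # variance of that estimate [m^2]


def measurement_update(est: Estimate, z: Optional[float], sigma: float) -> Estimate:
    """Fuse one direct position measurement z with 1-sigma accuracy sigma.

    A measurement of None models an unavailable sensor and leaves the
    estimate unchanged.
    """
    if z is None:
        return est
    gain = est.var / (est.var + sigma ** 2)        # Kalman gain
    mean = est.mean + gain * (z - est.mean)        # correct with the innovation
    var = (1.0 - gain) * est.var                   # uncertainty shrinks
    return Estimate(mean, var)


# Hypothetical 1-sigma accuracies, chosen only for illustration.
GPS_SIGMA_M = 5.0
LTE_SIGMA_M = 50.0

prior = Estimate(mean=0.0, var=100.0 ** 2)

# Open sky: both GPS and LTE measurements are available.
open_sky = measurement_update(prior, 10.0, GPS_SIGMA_M)
open_sky = measurement_update(open_sky, 30.0, LTE_SIGMA_M)

# Urban canyon: GPS is unavailable, so only the coarse LTE fix is fused.
canyon = measurement_update(prior, None, GPS_SIGMA_M)
canyon = measurement_update(canyon, 30.0, LTE_SIGMA_M)

print(f"open sky variance: {open_sky.var:.1f} m^2")
print(f"canyon variance:   {canyon.var:.1f} m^2")
```

In this toy case the LTE-only estimate is much less certain than the combined one but still far tighter than the prior, which is the sense in which LTE may offer approximate positioning when GPS drops out.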

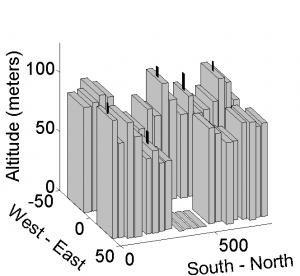

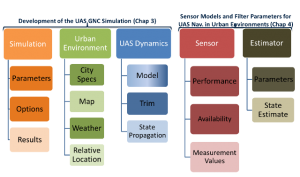

A MATLAB dynamics simulation of the vehicle, environment, sensors, and filters was developed and used to collect data from an extensive set of Monte Carlo simulations. As shown in the accompanying figure, it is organized into five data classes: simulation, urban environment, UAS dynamics, sensor, and estimator. Each can be configured to meet the user's needs in terms of environment layout, vehicle dynamics, sensor types and performance specifications, and state estimation filters.
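The five-part organization described above can be illustrated with a small Monte Carlo harness. The sketch below is in Python rather than MATLAB and is not the released simulation. The class names mirror the five categories, but every method, parameter, and numeric value (canyon extent, sensor accuracies, vehicle speed) is a hypothetical stand-in on a one-dimensional track.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class UrbanEnvironment:
    canyon_start_m: float  # GPS is assumed blocked between these two positions
    canyon_end_m: float

    def gps_available(self, x: float) -> bool:
        return not (self.canyon_start_m <= x <= self.canyon_end_m)


@dataclass
class Sensor:
    sigma_m: float  # 1-sigma position accuracy

    def measure(self, x_true: float, rng: random.Random) -> float:
        return x_true + rng.gauss(0.0, self.sigma_m)


@dataclass
class UasDynamics:
    speed_mps: float
    disturbance_sigma_m: float  # random position disturbance per step

    def step(self, x: float, dt: float, rng: random.Random) -> float:
        return x + self.speed_mps * dt + rng.gauss(0.0, self.disturbance_sigma_m)


class Estimator:
    """Scalar Kalman filter on position."""

    def __init__(self, mean: float, var: float, process_var: float):
        self.mean, self.var, self.process_var = mean, var, process_var

    def predict(self, dx: float) -> None:
        self.mean += dx
        self.var += self.process_var

    def update(self, z: float, sigma: float) -> None:
        gain = self.var / (self.var + sigma ** 2)
        self.mean += gain * (z - self.mean)
        self.var *= 1.0 - gain


class Simulation:
    def __init__(self, env, dynamics, gps, lte, n_steps=100, dt=1.0):
        self.env, self.dynamics, self.gps, self.lte = env, dynamics, gps, lte
        self.n_steps, self.dt = n_steps, dt

    def run(self, seed: int) -> float:
        """Run one trial and return the RMS position error in meters."""
        rng = random.Random(seed)
        x = 0.0
        est = Estimator(0.0, 1.0, self.dynamics.disturbance_sigma_m ** 2)
        sq_err = 0.0
        for _ in range(self.n_steps):
            x = self.dynamics.step(x, self.dt, rng)
            est.predict(self.dynamics.speed_mps * self.dt)
            if self.env.gps_available(x):          # GPS only outside the canyon
                est.update(self.gps.measure(x, rng), self.gps.sigma_m)
            est.update(self.lte.measure(x, rng), self.lte.sigma_m)
            sq_err += (est.mean - x) ** 2
        return math.sqrt(sq_err / self.n_steps)


def monte_carlo(sim: Simulation, n_runs: int) -> float:
    """Average the RMS error over n_runs independently seeded trials."""
    return sum(sim.run(seed) for seed in range(n_runs)) / n_runs


dynamics = UasDynamics(speed_mps=10.0, disturbance_sigma_m=2.0)
gps, lte = Sensor(5.0), Sensor(50.0)
urban = Simulation(UrbanEnvironment(200.0, 800.0), dynamics, gps, lte)
open_sky = Simulation(UrbanEnvironment(math.inf, math.inf), dynamics, gps, lte)

urban_rmse = monte_carlo(urban, 200)
open_rmse = monte_carlo(open_sky, 200)
print(f"urban canyon RMS error: {urban_rmse:.2f} m")
print(f"open sky RMS error:     {open_rmse:.2f} m")
```

Keeping the environment, dynamics, sensors, and estimator as separate objects means any one of them can be swapped without touching the trial loop, which is the kind of configurability the paragraph above describes.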

FUTURE WORK

- Sensors
  - Use of onboard LiDAR for very accurate local ranging measurements when available
  - Use of beacons as another inertial position measurement source
  - Use of a downward-facing vision sensor (street markings)
  - Finer-grained GPS/LTE data
  - More robust GPS/LTE network-receiver modeling to account for the effects of specific buildings/features on the signals
  - Experimental verification of models (possible for GPS; more difficult for LTE due to the proprietary nature of the networks)

- State Estimation Filters
  - Particle filter weighting algorithms based on measurement confidence

- Urban Environment
  - Addition of wind models to add realism to the expected vehicle disturbances when flying in the urban environment
  - Addition of other urban features such as overpasses, skybridges, monuments, and parks
  - Use of real-world open-source map/building information for cities such as NYC, London, Tokyo, Shanghai, and Hong Kong

- UAS Dynamics
  - Use of flight-test data (both noisy and ground truth) to better characterize the form of the process noise covariance matrix and assist in filter tuning
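As one possible reading of the particle filter item in the list above, the sketch below weights particles by a Gaussian likelihood whose width grows as confidence in the measurement falls. This is an illustrative assumption, not a method from the thesis: the `confidence_weights` function, the confidence scale in (0, 1], and the rule of dividing sigma by confidence are all hypothetical.

```python
import math
from typing import List


def confidence_weights(particles: List[float], z: float,
                       sigma: float, confidence: float) -> List[float]:
    """Return normalized particle weights for a position measurement z.

    The nominal 1-sigma accuracy is inflated to sigma / confidence, so a
    low-confidence measurement discriminates less between particles.
    """
    if not 0.0 < confidence <= 1.0:
        raise ValueError("confidence must be in (0, 1]")
    sigma_eff = sigma / confidence
    raw = [math.exp(-0.5 * ((z - p) / sigma_eff) ** 2) for p in particles]
    total = sum(raw)
    if total == 0.0:
        # Every likelihood underflowed; fall back to uniform weights.
        return [1.0 / len(particles)] * len(particles)
    return [w / total for w in raw]


particles = [0.0, 10.0, 20.0, 30.0]   # candidate positions [m]
trusted = confidence_weights(particles, z=10.0, sigma=5.0, confidence=1.0)
doubtful = confidence_weights(particles, z=10.0, sigma=5.0, confidence=0.1)

print("trusted: ", [round(w, 3) for w in trusted])
print("doubtful:", [round(w, 3) for w in doubtful])
```

With full confidence the weight concentrates on the particle nearest the measurement, while with low confidence the weights stay close to uniform and the measurement barely reshapes the particle set.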

MATLAB SIMULATION SOURCE CODE

The object-oriented MATLAB source code for the simulation used in this research is available for download in the zip file below. After unzipping the file into the appropriate MATLAB directory, run the simulation by executing the ../uas_sim_execute M-file. Simulation parameters are set in the ../core/initialization/set_sim_parameters_options M-file, and sensor properties are set in the ../core/initialization/set_sensor_properties M-file. A detailed user manual is coming soon.

Code download: urban_simulation